A weekend making AI video, a 6-minute voice memo, and the dead internet we’re all building together.

I’m going to put my money where my mouth is. This entire post started as a rant into SuperWhisper — a voice-to-text app I love — with the plan to run it through a Claude pipeline I built and have it spit out something publishable. More on that process at the end. But first, let me tell you about a weekend I spent trying to make a short film with AI.

The Experiment

I’d been meaning to test the current crop of AI video generation tools. Not with a big budget — I didn’t want to burn through a pile of credits — so I kept the scope tight.

I’d seen a lot of what people are making with these tools, and most of it feels… spectacular. And I mean that literally — it’s spectacle. A kitten fighting Godzilla. Beautiful, surreal, directionless. There’s no human hand behind it other than the text prompt, and while I know that’s an oversimplification (there’s real craft emerging in this space), the technology still has this quality where the machine kind of misunderstands you at first. Which, conveniently, burns through your tokens. If you’re doing this professionally, you’re going to start measuring your marketing budget in tokens instead of crew hours. The landscape is already shifting — some hybrid role between old-school production, marketing, post, and a little web savviness is taking shape.

So rather than make another spectacle, I wanted to try something with a point of view.

Guerrilla Radio as a Template

These tools give you about five seconds per clip. That constraint reminded me of a short film format I’d seen — four shots, four seconds each, tell a story. I didn’t follow that exactly, but I went looking for a visual template and landed on Rage Against the Machine’s “Guerrilla Radio” video.

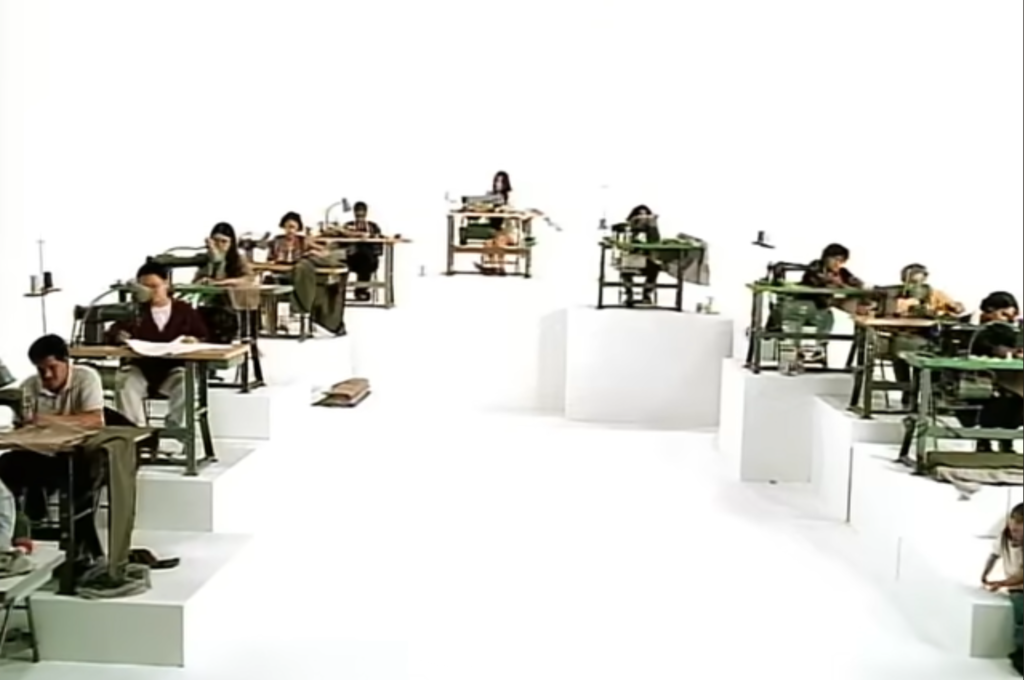

It opens with this incredible shot: rows of workers — all brown, all hunched over sewing machines — against a stark, sterile white background. They form this triangle pushing out toward the camera. Then it cuts to the band playing on a similarly blank stage.

I thought there were echoes worth chasing. The original video was talking about wages, outsourcing, sweatshops. And here we are again with a new kind of class stratification. Now it’s the laptop class’s turn to get annihilated. The people who thought they were safe behind a screen are watching AI come for their work in real time.

So I recreated that structure. But instead of cutting to the band, I cut to the people I think are the real arbiters of this AI moment. Jensen Huang, obviously. The big tech companies sitting on mountains of cash: Google, Apple, Microsoft, Meta. Because if the AI bubble bursts and the unit economics don’t work out for the smaller players, these are the ones left standing. They’re the band on the white stage.

The Technicals

At a tool level, I bounced between three platforms: OpenArt, ArtList, and Kling AI. FREE CREDITS, why not? I’d feed them reference images of that robot character you see in the final piece and then just prompt it with text — “turn this into a crowd,” that kind of thing. Pretty straightforward on that end.

The CEO shots were trickier. I took standalone photos of the tech CEOs (Jensen, Zuckerberg, the usual suspects) and needed to place them on that empty white stage from the original Rage Against the Machine video. So I removed the band, dropped the CEOs in, and then had to match the feet. That was honestly the hardest part. I’d feed ChatGPT a reference image of the Rage members’ feet and positioning so it could match the stance for each CEO. I did most of the generation through ChatGPT specifically because you don’t want to be typing names and likenesses into text-based generation tools. That stuff gets flagged. Having reference images to work from sidesteps a lot of those guardrails.

The group shot of all the tech CEOs together I mocked up in Photoshop. Then I brought everything into Premiere to cut and sequence the clips.

And honestly? The worst parts of the final video are my fault. The cuts, the push-ins. I did this linear zoom that should have been eased in. It looks stiff. I just didn’t have the time to finesse it. Because here’s the thing people don’t talk about enough: making stuff this way is still really time-consuming compared to where this is headed. But think about what it replaces. Coordinating a crew. Renting a studio. Hiring extras. I did this over a weekend with free tools and a laptop. That gap is closing fast. There isn’t a workflow right now, at least not one that isn’t absurdly expensive, where you can just generate a cohesive video end to end.

But I can see it coming. Something like Premiere building its own generative workspace. There’s ComfyUI, which popped into my world about four days ago, and it has these incredible node-based workflows that can generate different angles, find compositions, chain processes together. The potential for producing lower-cost content is obvious. Two weekends ago when I actually built this thing, that tooling wasn’t really there yet. The speed at which this space is moving is genuinely hard to keep up with.

So to sum it up: a lot of reference images, some Photoshop compositing, basic Premiere editing, and a willingness to show an AI a picture of a person with a laptop next to that original sewing machine shot and let it figure out the rest from a text prompt. That’s really all it took.

The Dead Internet Feeling

Here’s where my cynicism kicks in. I want to use AI for production — for content, and I say that word sarcastically. But if everyone is doing this, what are we actually building? We’re making AI-generated content so that other AI can read it, hoping that somewhere down the funnel a human actually sees it. It’s the dead internet theory playing out in real time.

Martin Scorsese said something when digital cameras went mainstream that I keep coming back to: there will be great creators in this space, but you’re going to drown in bad film for a while. That’s a pretty lucid take on what any new technology does. It makes things accessible. Some people who never had a shot will make something incredible. But there’s going to be a lot of trash first.

So in my little video, I kept cutting back to robots staring at billionaires. That felt about right. Draw your own conclusions.

The Pipeline (Or: How This Post Actually Got Made)

This whole thing (the rambling you just read in polished form) started as about six minutes of me talking into a microphone **plus the second recording we had. I ran the transcript through a Claude project I built that works in steps:

- It takes raw speech and rewrites it as a technical explanation from a specific perspective — in this case, a marketing person breaking down what I actually did and why.

- That explanation gets turned into a set number of paragraphs.

- Those paragraphs get simplified to a seventh-to-eighth grade reading level. Warren Buffett’s reading level. If it’s good enough for him, it’s good enough for me and whoever’s reading this.
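For the curious, here is a minimal sketch of how those three steps could be chained in Python. It is not my actual Claude project: the prompt wording, the `run_pipeline` function, the default paragraph count, and the `complete` callable (a stand-in for whatever sends a prompt to a model and returns its reply) are all assumptions made up for illustration.

```python
from typing import Callable, List

# Hypothetical prompt templates, one per step. The wording is illustrative,
# not the prompts used in the real project.
STEPS: List[str] = [
    "Rewrite this raw speech transcript as a technical explanation "
    "from the perspective of {persona}:\n\n{text}",
    "Turn this explanation into exactly {paragraphs} paragraphs:\n\n{text}",
    "Simplify these paragraphs to a seventh-to-eighth grade reading level:\n\n{text}",
]


def run_pipeline(
    transcript: str,
    complete: Callable[[str], str],  # assumed: takes a prompt, returns the model's reply
    persona: str = "a marketing person",
    paragraphs: int = 5,  # assumed default; the post only says "a set number"
) -> str:
    """Feed the output of each step into the next step's prompt."""
    text = transcript
    for template in STEPS:
        prompt = template.format(persona=persona, paragraphs=paragraphs, text=text)
        text = complete(prompt)
    return text


if __name__ == "__main__":
    # Stand-in model that just reports how long each prompt was,
    # so the sketch runs without any API key.
    def echo_model(prompt: str) -> str:
        return f"[model reply to {len(prompt)} characters of prompt]"

    print(run_pipeline("six minutes of rambling about AI video", echo_model))
```

Keeping the model call behind a plain function means you can swap in a real API client later, or a fake one for testing, without touching the steps themselves.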

I think building internal tools like this is one of the genuinely useful things AI offers right now. Not replacing the thinking — organizing it. Taking the messy, meandering version and giving it shape so you can decide what stays.

**Pieces in highlight are places I had to do hand edits. Let me know if you think this piece is engaging or if it blows and you hated reading it. Maybe rate it on a 10-point scale.
